Laboratory Activty 2#

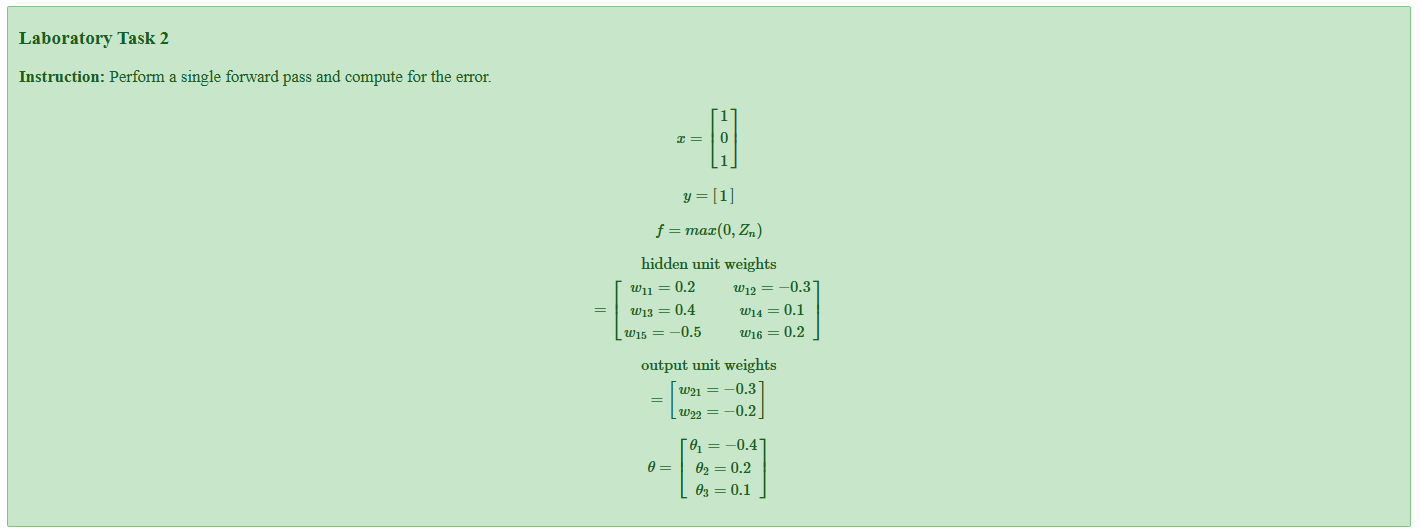

Laboratory Task 2#

Define Inputs and Weights#

# Define inputs and weights (given values)

import numpy as np

from math import exp

print("Define inputs and weights (as given in the problem)\n")

# Input and target

x = np.array([1, 0, 1]) # input vector (3,)

y = 1 # desired target (scalar)

# Hidden-unit weight matrix (3 inputs -> 2 hidden units)

# Interpreting the matrix as rows = inputs, cols = hidden units:

# [[w11, w12],

# [w13, w14],

# [w15, w16]]

W_hidden = np.array([

[0.2, -0.3],

[0.4, 0.1],

[-0.5, 0.2]

])

# Output-layer parameters (interpreting θ = [bias, w_h1, w_h2])

theta = np.array([-0.4, 0.2, 0.1])

# The problem statement also listed "output unit weights = [w21=-0.3, w22=-0.2]".

# To avoid ambiguity we will use the provided θ vector for the final output computation

# (θ = [bias, weight_hidden1, weight_hidden2]) because it clearly includes a bias term.

print("x =", x)

print("y =", y)

print("W_hidden =\n", W_hidden)

print("theta (output parameters) =", theta)

Define inputs and weights (as given in the problem)

x = [1 0 1]

y = 1

W_hidden =

[[ 0.2 -0.3]

[ 0.4 0.1]

[-0.5 0.2]]

theta (output parameters) = [-0.4 0.2 0.1]

In this step, we define all the given values from the problem:

x represents the input vector

[1, 0, 1].y is the target output, which equals

1.W_hidden is the weight matrix connecting the input layer to the hidden layer. It has two hidden neurons.

θ (theta) contains the bias and weights for the output unit, represented as

[bias, w_h1, w_h2] = [-0.4, 0.2, 0.1].

These parameters will be used throughout the forward pass to calculate activations and final output.

They define how information flows from inputs through the hidden layer to the output.

Apply ReLU Activation#

# Apply ReLU activation to hidden units: a_hidden = max(0, z_hidden)

print("Apply ReLU to get hidden activations (a_hidden)\n")

a_hidden = np.maximum(0, z_hidden)

print("a_hidden =", a_hidden) # expected [0.0, 0.0]

Apply ReLU to get hidden activations (a_hidden)

a_hidden = [0. 0.]

The ReLU (Rectified Linear Unit) activation function is defined as:

[ f(z) = \max(0, z) ]

Applying it to each pre-activation value:

[ a_{hidden} = \max(0, [-0.3, -0.1]) = [0, 0] ]

Because both pre-activation values were negative, the output becomes 0 for both hidden neurons.

This means neither neuron is “activated” — they both output zero to the next layer.

Output Pre-activation#

# Compute output pre-activation (z_out) using θ = [bias, w_h1, w_h2]

print("Output pre-activation (z_out) using θ = [bias, w_h1, w_h2]\n")

bias = theta[0]

w_h1 = theta[1]

w_h2 = theta[2]

z_out = bias + w_h1 * a_hidden[0] + w_h2 * a_hidden[1]

print(f"bias = {bias}, w_h1 = {w_h1}, w_h2 = {w_h2}")

print("z_out =", z_out) # with a_hidden=[0,0] this equals bias (-0.4)

Output pre-activation (z_out) using θ = [bias, w_h1, w_h2]

bias = -0.4, w_h1 = 0.2, w_h2 = 0.1

z_out = -0.4

Now, we calculate the output neuron’s pre-activation value using the output weights and bias:

[ z_{out} = \theta_0 + \theta_1 a_{h1} + \theta_2 a_{h2} ]

Substituting the values:

[ z_{out} = (-0.4) + (0.2)(0) + (0.1)(0) = -0.4 ]

This is the raw (unactivated) output before applying any final activation function.

Because all hidden activations were zero, only the bias influences the result.

Prediction (ŷ)#

# Prediction (ŷ) — assume identity (linear) output activation

print("Prediction (ŷ) using identity output activation\n")

y_hat = z_out

print("y_hat =", y_hat)

Prediction (ŷ) using identity output activation

y_hat = -0.4

In this case, the output layer uses an identity activation function, so the predicted value is simply:

[ \hat{y} = z_{out} = -0.4 ]

This represents the model’s final output prediction.

Since the target output ( y = 1 ), we can already expect some error between prediction and truth.

Compute Error#

# Compute error (squared error E = 1/2 * (y - ŷ)^2)

print("Compute squared error (E = 0.5 * (y - ŷ)^2)\n")

error = 0.5 * (y - y_hat)**2

print("error =", error) # numeric value

print("\n-- End --\n")

# For completeness: show what would happen if we applied a sigmoid output activation

def sigmoid(z): return 1.0 / (1.0 + np.exp(-z))

z_out_sig = z_out

y_prob = sigmoid(z_out_sig)

mse_sig = 0.5 * (y - y_prob)**2

# cross-entropy loss for label y=1: -log(y_prob)

ce_sig = -np.log(y_prob)

print("Extra (not required but informative):")

print(" Sigmoid(output) =", y_prob)

print(" Squared error with sigmoid output =", mse_sig)

print(" Cross-entropy loss (y=1) =", ce_sig)

Compute squared error (E = 0.5 * (y - ŷ)^2)

error = 0.9799999999999999

-- End --

Extra (not required but informative):

Sigmoid(output) = 0.401312339887548

Squared error with sigmoid output = 0.17921345718546142

Cross-entropy loss (y=1) = 0.9130152523999526

We use the Mean Squared Error (MSE) loss function, defined as:

[ E = \frac{1}{2}(y - \hat{y})^2 ]

Substituting values:

[ E = 0.5(1 - (-0.4))^2 = 0.5(1.4)^2 = 0.98 ]

This error value (≈ 0.98) indicates the magnitude of difference between the model’s prediction and the true value.

A high error shows that the current weights are not well-optimized yet.